In this post I’ll describe how to combine the power of Qt and OpenCV to develop a good-looking and fun object detector. The method explained here contains quite a few things you can learn and reuse in your current and future projects, so let’s get started.

What you will learn

If you carefully go through the instructions and code samples provided in this post, you’ll learn:

- How to use OpenCV with Qt (How to include OpenCV libraries in a Qt Project)

- How to use Qt C++ classes in QML code

- How to access camera using QML Camera type

- How to subclass QAbstractVideoFilter and QVideoFilterRunnable classes to process QVideoFrame objects using OpenCV

- How to convert QVideoFrame to QImage

- How to convert QImage to OpenCV Mat

- How to detect objects using cascade classifiers

and many more …

What is needed

Obviously you need to have Qt Framework and OpenCV installed on your computer. You can use the latest versions of both of them, but just as a reference, I’ll be using Qt 5.10 and OpenCV 3.3.1.

How it is done

First of all, you need to create a Qt Quick application project. This type of project template in Qt Creator allows you to create a QML-based project that can be expanded using Qt C++ classes.

Add OpenCV and Qt Multimedia to your project

You must add the Qt Multimedia module and the OpenCV libraries to your project. Since I’m on the Windows operating system, I’m using the following lines in my project’s *.pro file to add the OpenCV libraries:

INCLUDEPATH += C:/path_to_opencv/include

LIBS += -LC:/path_to_opencv/lib

Debug : {
    LIBS += -lopencv_core331d \
        -lopencv_imgproc331d \
        -lopencv_imgcodecs331d \
        -lopencv_videoio331d \
        -lopencv_flann331d \
        -lopencv_highgui331d \
        -lopencv_features2d331d \
        -lopencv_photo331d \
        -lopencv_video331d \
        -lopencv_calib3d331d \
        -lopencv_objdetect331d \
        -lopencv_videostab331d \
        -lopencv_shape331d \
        -lopencv_stitching331d \
        -lopencv_superres331d \
        -lopencv_dnn331d \
        -lopencv_ml331d
}

Release: {
    LIBS += -lopencv_core331 \
        -lopencv_imgproc331 \
        -lopencv_imgcodecs331 \
        -lopencv_videoio331 \
        -lopencv_flann331 \
        -lopencv_highgui331 \
        -lopencv_features2d331 \
        -lopencv_photo331 \
        -lopencv_video331 \
        -lopencv_calib3d331 \
        -lopencv_objdetect331 \
        -lopencv_videostab331 \
        -lopencv_shape331 \
        -lopencv_stitching331 \
        -lopencv_superres331 \
        -lopencv_dnn331 \
        -lopencv_ml331
}

Needless to say, you need to replace the paths with the ones on your computer. You also need to add OpenCV bin folder to the PATH environment variable, otherwise OpenCV DLL files won’t be visible to your app and it will crash as soon as you start it.

As for adding Qt Multimedia module, use the following line in your *.pro file:

QT += multimedia

Processing Video Frames Grabbed by QML Camera

We’ll be using the QML Camera type later on to access and read video frames from the camera. In the Qt framework, processing those frames is done by subclassing the QAbstractVideoFilter and QVideoFilterRunnable classes, as seen here:

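#include <QAbstractVideoFilter>
#include <opencv2/opencv.hpp>

// Filter object that is exposed to QML and creates the runnable below
class QCvDetectFilter : public QAbstractVideoFilter
{
    Q_OBJECT
public:
    QVideoFilterRunnable* createFilterRunnable() override;

signals:
    // Carries the normalized (0..1) rectangle of a detection,
    // or all zeros when nothing was detected
    void objectDetected(float x, float y, float w, float h);

public slots:
};

// Runnable that does the actual per-frame processing
class QCvDetectFilterRunnable : public QVideoFilterRunnable
{
public:
    QCvDetectFilterRunnable(QCvDetectFilter* creator) { filter = creator; }
    QVideoFrame run(QVideoFrame* input, const QVideoSurfaceFormat& surfaceFormat, RunFlags flags) override;

private:
    void dft(cv::InputArray input, cv::OutputArray output);
    QCvDetectFilter* filter;
};

The implementation of createFilterRunnable is quite simple, as seen here: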

QVideoFilterRunnable* QCvDetectFilter::createFilterRunnable()
{
    return new QCvDetectFilterRunnable(this);
}

For the run function though, we need to take care of quite a few things, starting with converting QVideoFrame to QImage and then to OpenCV Mat, as seen here:

QVideoFrame QCvDetectFilterRunnable::run(QVideoFrame* input, const QVideoSurfaceFormat& surfaceFormat, RunFlags flags)
{
    Q_UNUSED(flags);

    // Map the frame into CPU-accessible memory before touching its bits
    input->map(QAbstractVideoBuffer::ReadOnly);

    if (surfaceFormat.handleType() == QAbstractVideoBuffer::NoHandle)
    {
        // Wrap the frame data in a QImage (no copy is made here).
        // The row stride is passed explicitly in case rows are padded.
        QImage image(input->bits(),
                     input->width(),
                     input->height(),
                     input->bytesPerLine(),
                     QVideoFrame::imageFormatFromPixelFormat(input->pixelFormat()));

        // Convert to 24-bit RGB so the data matches a 3-channel, 8-bit Mat
        image = image.convertToFormat(QImage::Format_RGB888);

        // Wrap the QImage data in a Mat without copying it
        cv::Mat mat(image.height(),
                    image.width(),
                    CV_8UC3,
                    image.bits(),
                    image.bytesPerLine());

        // The image processing will happen here ...
    }
    else
    {
        qDebug() << "Other surface formats are not supported yet!";
    }

    input->unmap();
    return *input;
}

The image processing part, namely the actual detection, starts with a vertical flip, to make sure the data is not upside down as it is after converting from QVideoFrame to Mat:

cv::flip(mat, mat, 0);

The cascade classifier XML (in this case a face classifier) is embedded into the executable as a Qt resource, so it is first written out to a temporary file and then loaded from there. This is necessary because OpenCV can’t access the Qt resource system by default:

QFile xml(":/faceclassifier.xml");
if (xml.open(QFile::ReadOnly | QFile::Text))
{
    // Copy the embedded resource to a real file that OpenCV can open
    QTemporaryFile temp;
    if (temp.open())
    {
        temp.write(xml.readAll());
        temp.close();
        if (classifier.load(temp.fileName().toStdString()))
        {
            qDebug() << "Successfully loaded classifier!";
        }
        else
        {
            qDebug() << "Could not load classifier.";
        }
    }
    else
    {
        qDebug() << "Can't open temp file.";
    }
}
else
{
    qDebug() << "Can't open XML.";
}

Note that the classifier should not be loaded at each and every frame, since that would make things quite slow. Detection is already a time-consuming process, so load the classifier only at the first frame or, as it is done in our example code, only when the classifier is empty. If the classifier is not empty, proceed with the detection code:

if (classifier.empty())
{
    // Load the classifier
}
else
{
    std::vector<cv::Rect> detected;

    /*
     * Resize is not mandatory but it can speed up things quite a lot!
     */
    QSize resized = image.size().scaled(320, 240, Qt::KeepAspectRatio);
    cv::resize(mat, mat, cv::Size(resized.width(), resized.height()));

    classifier.detectMultiScale(mat, detected, 1.1);

    // We'll use only the first detection to make sure things are not slow on the qml side
    if (detected.size() > 0)
    {
        // Normalize x,y,w,h to values between 0..1 and send them to UI
        emit filter->objectDetected(float(detected[0].x) / float(mat.cols),
                                    float(detected[0].y) / float(mat.rows),
                                    float(detected[0].width) / float(mat.cols),
                                    float(detected[0].height) / float(mat.rows));
    }
    else
    {
        emit filter->objectDetected(0.0,
                                    0.0,
                                    0.0,
                                    0.0);
    }
}

The classifier is defined globally in our source code, like this:

cv::CascadeClassifier classifier;
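To make the arithmetic in the detection step easier to follow, here is a small standalone sketch in plain C++ with no Qt or OpenCV dependency. The names Size, Rect, NormRect, scaledKeepAspect and normalize are hypothetical helpers made up for this illustration. scaledKeepAspect only approximates what QSize::scaled does with Qt::KeepAspectRatio (Qt’s exact rounding may differ), and normalize mirrors the divisions passed to objectDetected.

```cpp
#include <cstdint>

// Hypothetical plain structs standing in for QSize / cv::Rect in this sketch.
struct Size { int width; int height; };
struct Rect { int x; int y; int width; int height; };
struct NormRect { float x; float y; float w; float h; };

// Fit `src` inside `bound` while keeping the aspect ratio.
// Assumes src.width > 0 and src.height > 0. Integer division truncates,
// so Qt's own rounding may differ by a pixel.
Size scaledKeepAspect(Size src, Size bound)
{
    // Width we would get if the full bounding height were used.
    std::int64_t widthAtFullHeight =
        static_cast<std::int64_t>(bound.height) * src.width / src.height;
    if (widthAtFullHeight <= bound.width) {
        // Height is the limiting dimension.
        return { static_cast<int>(widthAtFullHeight), bound.height };
    }
    // Width is the limiting dimension.
    std::int64_t heightAtFullWidth =
        static_cast<std::int64_t>(bound.width) * src.height / src.width;
    return { bound.width, static_cast<int>(heightAtFullWidth) };
}

// Express a pixel rectangle as fractions (0..1) of the frame it was found in.
NormRect normalize(Rect r, Size frame)
{
    return { float(r.x) / float(frame.width),
             float(r.y) / float(frame.height),
             float(r.width) / float(frame.width),
             float(r.height) / float(frame.height) };
}
```

With a 1280x720 frame and the 320x240 bound used above, this gives 320x180, and a detection at (80, 45) sized 160x90 in that resized frame normalizes to (0.25, 0.25, 0.5, 0.5). Sending fractions instead of pixels is what lets the QML side stay independent of the size the frame was shrunk to.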

Using Qt C++ classes in QML code

This is done by first registering the class using qmlRegisterType function and then importing it into our QML code. Something like this in your main.cpp file:

qmlRegisterType<QCvDetectFilter>("com.amin.classes", 1, 0, "CvDetectFilter");

Then you can use the following at the top of your QML file to import CvDetectFilter, which is now a known QML type:

import com.amin.classes 1.0

Following that, we can use the code below in our QML file to define and use CvDetectFilter, and to respond to both successful and missed detections:

CvDetectFilter
{
    id: testFilter
    onObjectDetected:
    {
        if ((w == 0) || (h == 0))
        {
            // Not detected
        }
        else
        {
            // detected
        }
    }
}

Defining the Camera and setting filters (in VideoOutput) is done as seen here:

Camera
{
    id: camera
}

VideoOutput
{
    id: video
    source: camera
    autoOrientation: false
    filters: [testFilter]

    Image
    {
        id: smile
        source: "qrc:/smile.png"
        visible: false
    }
}

So, you can use the following code in onObjectDetected function of CvDetectFilter to draw the smiley on the detected face:

onObjectDetected:

{

if ((w == 0) || (h == 0))

{

smile.visible = false;

}

else

{

var r = video.mapNormalizedRectToItem(Qt.rect(x, y, w, h));

smile.x = r.x;

smile.y = r.y;

smile.width = r.width;

smile.height = r.height;

smile.visible = true;

}

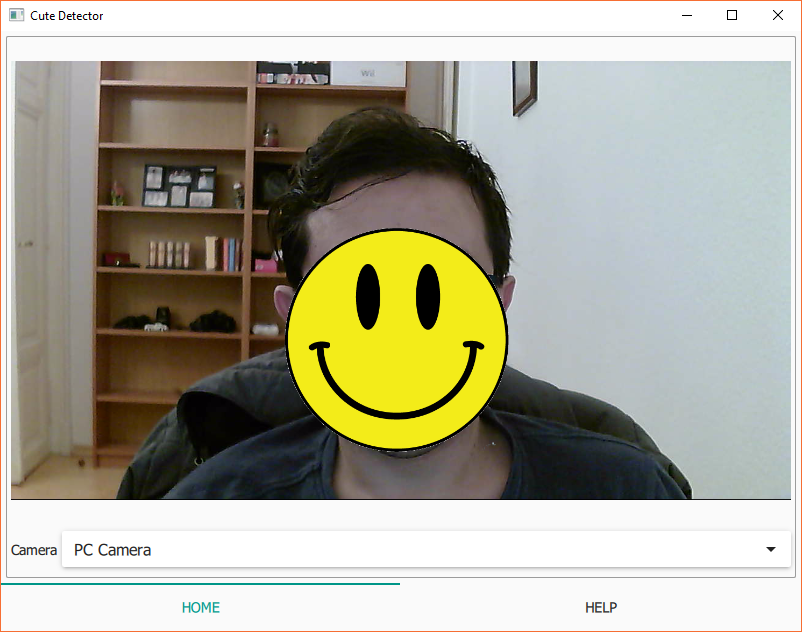

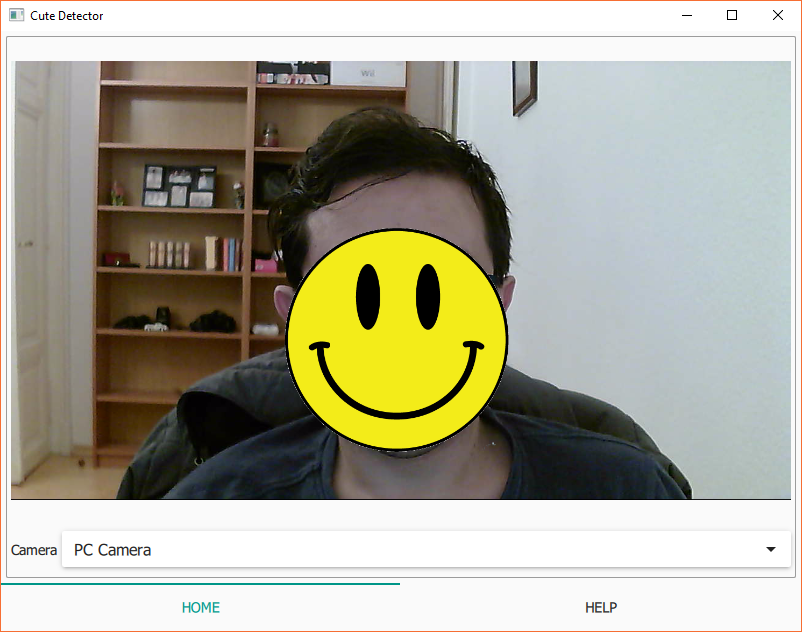

}Here’s how it looked like when I was playing around with this example. You can get the source codes for this down below:
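If you are curious what the mapping step in onObjectDetected boils down to, the sketch below shows the idea in plain C++. It is not Qt’s implementation of mapNormalizedRectToItem: it assumes the video is scaled to fit inside the item with its aspect ratio preserved, centered, and not rotated. RectF, contentRect and mapNormalizedToItem are hypothetical names used only for this illustration.

```cpp
// Hypothetical floating-point rectangle used only in this sketch.
struct RectF { double x; double y; double width; double height; };

// Area of the item actually covered by the video when the video is scaled to
// fit inside the item, aspect ratio preserved, and centered (letterboxing).
// Assumes all sizes are positive.
RectF contentRect(double itemW, double itemH, double videoW, double videoH)
{
    // Use the smaller of the two scale factors so the video fits entirely.
    double scale = (itemW / videoW < itemH / videoH) ? itemW / videoW
                                                     : itemH / videoH;
    double w = videoW * scale;
    double h = videoH * scale;
    return { (itemW - w) / 2.0, (itemH - h) / 2.0, w, h };
}

// Map a rectangle given as 0..1 fractions of the video into item coordinates.
RectF mapNormalizedToItem(RectF n, RectF content)
{
    return { content.x + n.x * content.width,
             content.y + n.y * content.height,
             n.width * content.width,
             n.height * content.height };
}
```

For an 800x600 item showing a 320x180 video, the video covers the area starting at (0, 75) with size 800x450, and a normalized rectangle of (0.25, 0.25, 0.5, 0.5) lands at (200, 187.5) with size 400x225.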

Where to get the source codes

You can use the following link to get the source code for the CuteDetector project, which contains everything explained in this post. Just replace the include(path/opencv.pri) line with the OpenCV include lines mentioned here and you’ll be fine:

https://bitbucket.org/amahta/cutedetector/src/master/

Hi,

Can you help me with real-time OCR in Qt for Android?

If you can, please help me.

I have searched the net a lot, but every sample I find is for Android Studio.

Hi,

Thank you for the tutorial.

Do you have an example of real-time OCR in Qt for Android?

Something like this: while the camera video is showing, touching the screen starts the OCR process without stopping the video.

It should use OpenCV and tess-two for OCR in Qt for Android.

Thanks for your answer.

You can search my website for OCR related articles. If there are any you can use them, otherwise, hopefully, I’ll write about it sometime in the future.

Hi Mr. Amin, thanks for the tutorial.

My project builds without errors, but I have a problem running it on the Android emulator and on my Android device.

When I run the project on the Android emulator, it gives me this error: “Other surface formats are not supported yet!”

What should I do to solve this problem?

Also, I want to add a button that starts the processing when it is clicked. How can I do that?

My work is very urgent, so please help me as soon as you can.

Thanks.

OpenCV version 2.4.10

Qt 5.9

Well, the error is pretty obvious. On the specific Qt version you’re using, you get “Other surface formats are not supported”, which in your case means an Android-related incompatibility.

Have you tried with more recent versions of Qt?

How do I add the OCR traineddata file to a Qt project for Android?

I have tried and searched a lot.

Please help me.

Hi Amin,

I am facing the following error while running the above QML OpenCV object detection source on an Embedded Linux platform.

defaultServiceProvider::requestService(): no service found for – “org.qt-project.qt.camera”

Kindly help me on how to solve the above-mentioned error.

Regards,

Manikandan.R

What version of Qt are you using? It looks like the camera service is not supported for the specific combination of Qt and operating system you are using.

Firstly, thanks for this very informative post.

Quick question for you, do you feel like there are any particular advantages with going with QCamera instead of the OpenCV VideoCapture device for acquiring frames from a camera? In previous projects I usually go with placing the frame acquisition part of opencv in a separate thread so it doesn’t block other portions of the program and I fill a thread safe buffer with frames as they are acquired. This then can be sent to other threads for image processing and/or sent to an openGL window for displaying in a GUI.

I ask because I am starting a new project using Qt Quick for the first time and am deciding between QCamera and OpenCV for camera acquisition. Thanks!

On desktop platforms you’re going to have a much easier time using OpenCV’s VideoCapture. In fact, the way you described you’ve been doing it in the past sounds ideal!

Using QCamera might help a bit on Android with NDK development since OpenCV’s VideoCapture won’t be an option there.

Thanks for the input Amin.

By the way, I just got your OpenCV/QT book this week. Super helpful!

Glad you found it helpful 🙂

hey amin, thanks for the good tutorial,

I’m running Qt 9.5.8 and OpenCV 3.4, but when I build and run the project, it crashes as soon as the camera is opened. Any tips?

I think Qt 9.5.8 was a mistype since there’s no such version but it doesn’t make much of a difference anyway so let’s skip that.

Have you copied all the necessary DLLs to your build folder? Or do you have the path to those DLLs in PATH environment variable? (assuming you are using Windows)

Hi,

Is it possible to download the project?

Regards

Of course, feel free to download and use it.

Can you send the download link please

Read the post carefully, it’s right at the end. Here is the same link already shared in the post:

https://bitbucket.org/amahta/cutedetector/src/master/

Hi Amin.

Thank you for this tutorial.

I have a little problem here whereby it says

Cannot read C:/opencv/build/include: Access is denied.

and in my project file, I have included the path just like this

# Default rules for deployment.
qnx: target.path = /tmp/$${TARGET}/bin
else: unix:!android: target.path = /opt/$${TARGET}/bin
!isEmpty(target.path): INSTALLS += target

win32:CONFIG(release, debug|release): LIBS += -L$$PWD/../../../../opencv/build/x64/vc15/lib/ -lopencv_world400
else:win32:CONFIG(debug, debug|release): LIBS += -L$$PWD/../../../../opencv/build/x64/vc15/lib/ -lopencv_world400d

win32: {
    include(C:\opencv\build\include)
}

INCLUDEPATH += $$PWD/../../../../opencv/build/include
DEPENDPATH += $$PWD/../../../../opencv/build/include

LIBS += -LC:/opencv/build/lib

Debug: {
    LIBS += -lopencv_core400d \
        -lopencv_imgproc400d \
        -lopencv_imgcodecs400d \
        -lopencv_videoio400d \
        -lopencv_flann400d \
        -lopencv_highgui400d \
        -lopencv_features2d400d \
        -lopencv_photo400d \
        -lopencv_video400d \
        -lopencv_calib3d400d \
        -lopencv_objdetect400d \
        -lopencv_videostab400d \
        -lopencv_shape400d \
        -lopencv_stitching400d \
        -lopencv_superres400d \
        -lopencv_dnn400d \
        -lopencv_ml400d
}

Release: {
    LIBS += -lopencv_core400 \
        -lopencv_imgproc400 \
        -lopencv_imgcodecs400 \
        -lopencv_videoio400 \
        -lopencv_flann400 \
        -lopencv_highgui400 \
        -lopencv_features2d400 \
        -lopencv_photo400 \
        -lopencv_video400 \
        -lopencv_calib3d400 \
        -lopencv_objdetect400 \
        -lopencv_videostab400 \
        -lopencv_shape400 \
        -lopencv_stitching400 \
        -lopencv_superres400 \
        -lopencv_dnn400 \
        -lopencv_ml400
}

Can I know what is the problem? Thanks Amin and have a nice day!

Is that the install folder? (C:/opencv/build)

Btw, make sure your user has access to that folder.

Hi amin.

Thank you for your good tutorials.

I downloaded this tutorial source code and configured it in my Qt.

I use Qt 5.12 and OpenCV 4.

When I run it and print input->width() in the console, I get 1280.

But when I print the image width, I get 0 columns and 0 rows.

It means that the QVideoFrame input object can’t be converted to a QImage object, and

the program crashes with this error:

OpenCV(4.1.0-pre) /home/piltan/opencv/modules/imgproc/src/resize.cpp:3718: error: (-215:Assertion failed) !ssize.empty() in function ‘resize

What is the problem?

Thank you.

hi mr amin,

there is a bug in my output.

When I run the code below, the image is empty:

QImage image(input->bits(),

input->width(),

input->height(),

QVideoFrame::imageFormatFromPixelFormat(input->pixelFormat()));

please give me a solution. thank you

What is the value of input->handleType() you’re getting?

And what is the operating system you are trying this on?

I have the same problem and commented about it for you.

The value of input->handleType() is 0 for me, and my OS is Ubuntu Linux.

What is the problem?

With handle type zero you should be able to convert your video frame to image, unless your video has a non-standard format. What value do you get for imageFormatFromPixelFormat??? see here: https://doc.qt.io/qt-5/qvideoframe.html#imageFormatFromPixelFormat

I ran into the same problem on Linux. The problem is that imageFormatFromPixelFormat returns 0 because the camera image is YUV (YUYV to be specific). My solution was to do the conversion directly in OpenCV instead of Qt, using the video input:

cv::Mat mat_yuv(input->height(), input->width(), CV_8UC2, input->bits(), input->bytesPerLine());

cv::Mat mat_bgr;

cv::cvtColor(mat_yuv, mat_bgr, cv::COLOR_YUV2BGR_YUYV);

Then process mat_bgr as is (it has the right orientation, so no need to flip, as well as the right pixel order for OpenCV, BGR)
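For anyone curious what that YUYV conversion involves per pixel, here is a rough standalone sketch in plain C++. It is not OpenCV’s implementation and its output may differ slightly from cv::cvtColor. It assumes tightly packed rows, an even width, and a commonly used integer approximation of BT.601 limited-range video; yuyvToBgr and clampToByte are hypothetical names.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Clamp an intermediate value into the 0..255 range of one colour channel.
static std::uint8_t clampToByte(int v)
{
    return static_cast<std::uint8_t>(v < 0 ? 0 : (v > 255 ? 255 : v));
}

// Convert packed YUYV (bytes: Y0 U Y1 V, i.e. two pixels in four bytes) to
// interleaved BGR. Assumes an even width and no row padding.
// Coefficients: assumed integer approximation of BT.601 limited range.
std::vector<std::uint8_t> yuyvToBgr(const std::vector<std::uint8_t>& yuyv)
{
    std::vector<std::uint8_t> bgr;
    bgr.reserve(yuyv.size() / 4 * 6);
    for (std::size_t i = 0; i + 3 < yuyv.size(); i += 4) {
        const int d = yuyv[i + 1] - 128;  // U, shared by both pixels
        const int e = yuyv[i + 3] - 128;  // V, shared by both pixels
        const int lumas[2] = { yuyv[i], yuyv[i + 2] };
        for (int y : lumas) {
            const int c = 298 * (y - 16);
            bgr.push_back(clampToByte((c + 516 * d + 128) / 256));            // B
            bgr.push_back(clampToByte((c - 100 * d - 208 * e + 128) / 256));  // G
            bgr.push_back(clampToByte((c + 409 * e + 128) / 256));            // R
        }
    }
    return bgr;
}
```

The two-bytes-per-pixel layout is why the Mat above is created as CV_8UC2: each pixel has its own luma byte, while every pair of pixels shares one U and one V byte.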

Thanks for sharing this.

Thanks very much for sharing …. I just have a little build issue. Appreciate if you can comment … thank

:-1: error: qcvdetectfilter.o: undefined reference to symbol ‘_ZN2cv6resizeERKNS_11_InputArrayERKNS_12_OutputArrayENS_5Size_IiEEddi’

/home/mike/opencv-4.0.1/build/lib/libopencv_imgproc.so.4.0:-1: error: error adding symbols: DSO missing from command line

below please find my cutedetector.pro file.

Regards

QT += quick multimedia
CONFIG += c++11

# The following define makes your compiler emit warnings if you use
# any feature of Qt which as been marked deprecated (the exact warnings
# depend on your compiler). Please consult the documentation of the
# deprecated API in order to know how to port your code away from it.
DEFINES += QT_DEPRECATED_WARNINGS

# You can also make your code fail to compile if you use deprecated APIs.
# In order to do so, uncomment the following line.
# You can also select to disable deprecated APIs only up to a certain version of Qt.
#DEFINES += QT_DISABLE_DEPRECATED_BEFORE=0x060000 # disables all the APIs deprecated before Qt 6.0.0

INCLUDEPATH += /home/mike/opencv-4.0.1/build/include

LIBS += -L/home/mike/opencv-4.0.1/build/lib -lopencv_core -lopencv_imgcodecs -lopencv_highgui -lopencv_objdetect

HEADERS += \
    qcvdetectfilter.h

SOURCES += main.cpp \
    qcvdetectfilter.cpp

RESOURCES += qml.qrc

# Additional import path used to resolve QML modules in Qt Creator’s code model
QML_IMPORT_PATH =

# Additional import path used to resolve QML modules just for Qt Quick Designer
QML_DESIGNER_IMPORT_PATH =

# Default rules for deployment.
qnx: target.path = /tmp/$${TARGET}/bin
else: unix:!android: target.path = /opt/$${TARGET}/bin
!isEmpty(target.path): INSTALLS += target

win32: {
    include(D:/dev/cv/opencv-3.3.1/opencv.pri)
}

Are you trying to build for Android? or is it just Linux? You either have missing dependencies or corrupted dependencies.

You are right Amin, I will look into that project again. The reason I wanted that source code is that I couldn’t make it work :D. I’m going to check whether I made any mistakes. It would be good for me if you sent the code, but I would understand if you don’t. Thanks for all your effort. Loving your work.

Thanks for understanding Ates, and I’ll make sure to speed up the process of sharing all my codes.

Hi Amin,

Thank you for your answer. I figured out how to use the Android camera with QML from your http://amin-ahmadi.com/2017/02/10/how-to-use-cqtqmlopencv-to-write-mobile-applications/ article, then added face detection to it. I didn’t need to upgrade my Android version. By the way, can you send me the source code for this article: http://amin-ahmadi.com/image-transformer/ ? I’m trying to use the Android camera with C++ but can’t get it working. It would be very good for me to have a sample that I can look at.

Thanks a lot for your help.

Hi Ates,

I’m hoping to make all my projects open-source as soon as possible, including Image Transformer project, but that might take a bit of time.

However, if you are looking for combining Java and C++ code to be able to use Android capabilities directly (such as the camera), here is an example for you:

http://amin-ahmadi.com/2016/06/18/how-to-access-android-camera-using-qtc/

It’s a bit old and I’ve tried to add updates as much as I can, but you might need to rework it a little bit before it’s completely useful for your case.

Hello Amin,

I’m getting the “Other surface formats are not supported yet!” message when I try to run this app on an x86 5.0 (API 21) Android emulator. Can you tell me why this happens? My code compiles and runs without problems but doesn’t do the face detection.

Hi Ates,

The error you are getting and the reason why your face detection is not working are not related. Can you tell me what version of Qt you are using to build this project? Can you also try running on a higher Android API version? (such as API 22, or even higher if you can) and let me know if this helps or not?!

Oh, my mistake, one lib had been forgotten. It’s working fine now, sorry.

It’s okay.

One small tip though, “Unresolved External Symbol” almost literally means missing libs.

Hello, I’m trying to run this project with Qt 5.11 and OpenCV 3.4.2 and I received this error message. Could you help me?

qcvdetectfilter.obj:-1: erreur : LNK2019: unresolved external symbol “public: __thiscall cv::CascadeClassifier::CascadeClassifier(void)” (??0CascadeClassifier@cv@@QAE@XZ) referenced in function “void __cdecl `dynamic initializer for ‘classifier”(void)” (??__Eclassifier@@YAXXZ)

qcvdetectfilter.obj:-1: erreur : LNK2019: unresolved external symbol “public: __thiscall cv::CascadeClassifier::~CascadeClassifier(void)” (??1CascadeClassifier@cv@@QAE@XZ) referenced in function “void __cdecl `dynamic atexit destructor for ‘classifier”(void)” (??__Fclassifier@@YAXXZ)

qcvdetectfilter.obj:-1: erreur : LNK2019: unresolved external symbol “public: bool __thiscall cv::CascadeClassifier::empty(void)const ” (?empty@CascadeClassifier@cv@@QBE_NXZ) referenced in function “public: virtual class QVideoFrame __thiscall QCvDetectFilterRunnable::run(class QVideoFrame *,class QVideoSurfaceFormat const &,class QFlags)” (?run@QCvDetectFilterRunnable@@UAE?AVQVideoFrame@@PAV2@ABVQVideoSurfaceFormat@@V?$QFlags@W4RunFlag@QVideoFilterRunnable@@@@@Z)

qcvdetectfilter.obj:-1: erreur : LNK2019: unresolved external symbol “public: bool __thiscall cv::CascadeClassifier::load(class cv::String const &)” (?load@CascadeClassifier@cv@@QAE_NABVString@2@@Z) referenced in function “public: virtual class QVideoFrame __thiscall QCvDetectFilterRunnable::run(class QVideoFrame *,class QVideoSurfaceFormat const &,class QFlags)” (?run@QCvDetectFilterRunnable@@UAE?AVQVideoFrame@@PAV2@ABVQVideoSurfaceFormat@@V?$QFlags@W4RunFlag@QVideoFilterRunnable@@@@@Z)

qcvdetectfilter.obj:-1: erreur : LNK2019: unresolved external symbol “public: void __thiscall cv::CascadeClassifier::detectMultiScale(class cv::_InputArray const &,class std::vector<class cv::Rect_,class std::allocator<class cv::Rect_ > > &,double,int,int,class cv::Size_,class cv::Size_)” (?detectMultiScale@CascadeClassifier@cv@@QAEXABV_InputArray@2@AAV?$vector@V?$Rect_@H@cv@@V?$allocator@V?$Rect_@H@cv@@@std@@@std@@NHHV?$Size_@H@2@2@Z) referenced in function “public: virtual class QVideoFrame __thiscall QCvDetectFilterRunnable::run(class QVideoFrame *,class QVideoSurfaceFormat const &,class QFlags)” (?run@QCvDetectFilterRunnable@@UAE?AVQVideoFrame@@PAV2@ABVQVideoSurfaceFormat@@V?$QFlags@W4RunFlag@QVideoFilterRunnable@@@@@Z)

release\CuteDetector.exe:-1: erreur : LNK1120: 5 unresolved externals

Very helpful! Thanks!

Waiting for your new book.

Just perfect. thanks

Glad to be of help 🙂